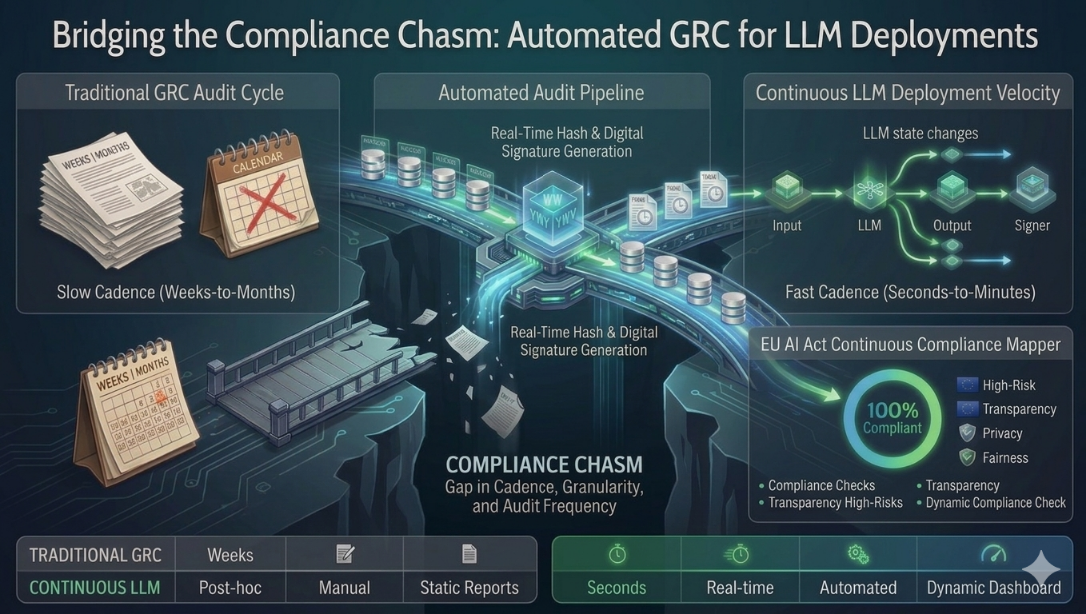

The compliance function was never designed to move at the speed of machine learning. Traditional governance, risk, and compliance (GRC) frameworks were built around a world where new systems were deployed quarterly, audited annually, and changed predictably. Large Language Models broke every one of those assumptions. An LLM in production can make millions of decisions per day, drift from its validated behaviour without warning, and interact with sensitive data in ways that no static policy document anticipated. The gap between what compliance frameworks require and what AI systems actually do in deployment is the compliance chasm, and most organisations have no systematic plan to cross it.

Why Manual Compliance Breaks at LLM Scale

Manual GRC processes have three structural failure modes when applied to LLM deployments. The first is velocity mismatch: a compliance review cycle that takes four weeks cannot govern a system making four million decisions per day. Over a single four-week cycle, that is roughly 112 million decisions made before the review concludes, so by the time the audit is complete, the system has already produced the behaviour the audit was designed to detect. The second is interpretability blindness: traditional compliance evidence relies on documented decision logic. LLMs do not have documented decision logic; they have learned weights and probabilistic sampling, which can produce different outputs for semantically similar inputs. The third is drift invisibility: a model that passed validation today may behave materially differently in 60 days as the distribution of real-world inputs shifts, with no external signal that anything has changed.

Automating the Audit Pipeline

Automated GRC for LLM deployments is not the replacement of human oversight — it is the infrastructure that makes human oversight tractable at scale. The goal is to move compliance evidence generation from a periodic manual exercise to a continuous automated process, so that human reviewers are directing their attention to anomalies and decisions rather than assembling documentation.

A production-grade automated audit pipeline for LLMs has five components:

- Input logging: Captures every prompt entering the system with timestamps, user context, and session metadata, written immutably so that records cannot be modified retroactively.

- Output logging: Captures every model response alongside the model version, temperature settings, and any system prompt in effect at the time.

- Behavioural monitoring: Runs continuous statistical analysis against baseline distributions to detect drift before it becomes a compliance event.

- Policy enforcement: Applies configurable rule sets (content policies, data handling rules, jurisdictional constraints) at inference time, blocking or flagging non-compliant outputs in real time rather than post hoc.

- Automated reporting: Synthesises the above into audit-ready evidence packages that map directly to the requirements of applicable regulatory frameworks.
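As a rough illustration of the input and output logging components, the sketch below chains each log entry to the previous one with a SHA-256 hash, so that any retroactive edit becomes detectable on verification. This is a minimal, in-memory example under stated assumptions: the `AuditLog` class, its method names, and the payload fields are all hypothetical, and a hash chain makes tampering evident rather than impossible. A real deployment would also need durable, access-controlled storage.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


class AuditLog:
    """Hypothetical append-only, hash-chained log (in-memory, for illustration)."""

    def __init__(self):
        self._entries = []

    @staticmethod
    def _digest(prev_hash: str, body: str) -> str:
        # Each hash covers the previous hash plus the canonical JSON body.
        return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()

    def append(self, payload: dict) -> str:
        """Serialise the payload canonically, chain it, and return its hash."""
        body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
        prev = self._entries[-1]["hash"] if self._entries else GENESIS
        entry = {"body": body, "prev": prev, "hash": self._digest(prev, body)}
        self._entries.append(entry)
        return entry["hash"]

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered entry breaks it."""
        prev = GENESIS
        for entry in self._entries:
            expected = self._digest(prev, entry["body"])
            if entry["prev"] != prev or entry["hash"] != expected:
                return False
            prev = entry["hash"]
        return True


# Illustrative usage with made-up field names and values.
log = AuditLog()
log.append({"kind": "input", "session": "s-1", "prompt": "What is our refund policy?"})
log.append({"kind": "output", "session": "s-1", "model_version": "v1",
            "temperature": 0.2, "response": "Refunds are accepted within 30 days."})
print(log.verify())
```

Storing the canonical JSON string, rather than the original dictionary, keeps the hashed bytes stable even if the caller later mutates the payload object.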

ViriSIM: Real-Time GRC in Practice

ViriSIM is Virideed's GRC automation layer for enterprise LLM deployments. It intercepts inference traffic, extracts and flags non-compliant outputs, and feeds violation patterns back into two active remediation paths — fine-tuning pipelines that correct model behaviour at the weights level, and pre-generation guardrails derived from flagged logs that can be injected upstream to prevent violations before inference occurs. The result is a closed-loop compliance architecture that gets more precise as the model operates, not a static monitor that reports after the fact.

- Non-compliant log extraction & flagging: ViriSIM identifies, extracts, and surfaces non-compliant inference events in real time — giving compliance teams actionable violation records rather than raw log exports.

- Model fine-tuning feedback loop: Flagged non-compliant logs are fed back into fine-tuning pipelines, correcting model behaviour at the weights level so the same violation pattern does not recur.

- Pre-generation guardrail generation: ViriSIM generates guardrails directly from flagged violation patterns and delivers them as injectable pre-generation constraints — preventing non-compliant outputs before inference rather than filtering them after.

- Continuous drift detection: Statistical monitoring against validated baseline distributions, with configurable alert thresholds and automated escalation workflows when behavioural drift exceeds acceptable tolerances.

- Immutable I/O logging: Append-only, cryptographically signed logs of every inference event, forming the evidentiary foundation for any regulatory inquiry or internal audit.

- Framework-mapped reporting: Automated generation of compliance evidence packages pre-mapped to EU AI Act Articles 9, 13, and 17, NIST AI RMF core functions, and ISO 42001 controls — eliminating the manual framework-mapping workload entirely.
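To make the continuous drift detection item more concrete, here is a generic sketch of one common drift statistic, the Population Stability Index (PSI), computed over categorical labels attached to each response. It is not a description of how ViriSIM works internally. The function names, the label values, and the 0.25 alert threshold are assumptions chosen for illustration; the threshold is a frequently quoted rule of thumb, not a regulatory value, and should be tuned per deployment.

```python
import math
from collections import Counter


def psi(baseline, current, epsilon=1e-6):
    """Population Stability Index between two samples of categorical labels.

    PSI = sum over categories of (c - b) * ln(c / b), where b and c are the
    category proportions in the baseline and current samples.
    """
    if not baseline or not current:
        raise ValueError("both samples must be non-empty")
    b_counts, c_counts = Counter(baseline), Counter(current)
    score = 0.0
    for category in set(b_counts) | set(c_counts):
        # Floor the proportions so a category missing from one sample
        # does not cause a division by zero or log(0).
        b = max(b_counts[category] / len(baseline), epsilon)
        c = max(c_counts[category] / len(current), epsilon)
        score += (c - b) * math.log(c / b)
    return score


def drift_alert(baseline, current, threshold=0.25):
    """Return True when PSI exceeds the (assumed) alert threshold."""
    return psi(baseline, current) > threshold


# Hypothetical per-response labels from a validation window and a live window.
validated = ["answered"] * 90 + ["refused"] * 10
live = ["answered"] * 60 + ["refused"] * 40
print(drift_alert(validated, live))
```

PSI is only one option; for continuous metrics such as response length or a toxicity score, a two-sample test over binned or raw values may be a better fit.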

"The question is no longer whether your AI system is compliant at launch. The question is whether your organisation can prove it remained compliant every minute since launch."

Regulatory Alignment

The three frameworks most relevant to enterprise LLM compliance each impose distinct but overlapping requirements that automated GRC must address simultaneously. Only the first is binding law; the other two are a voluntary framework and a certifiable standard. The EU AI Act requires ongoing monitoring, record-keeping, and human oversight mechanisms for high-risk systems, with documentation that must be available to national competent authorities on request. The NIST AI RMF is organised around four core functions (Govern, Map, Measure, Manage), each treated as a continuous rather than periodic activity. ISO/IEC 42001, the international standard for AI management systems published in 2023, requires evidence of systematic risk management and performance monitoring across the full AI lifecycle.

An automated GRC pipeline that generates continuous, framework-mapped evidence can supply the ongoing-monitoring evidence that all three call for at once. An organisation relying on periodic manual audits satisfies none of them adequately, because all three frameworks, in their current form, implicitly or explicitly require ongoing monitoring that manual processes cannot deliver at LLM deployment scale.

Closing the Chasm

The compliance chasm will not close itself. Every new LLM deployment without automated GRC infrastructure widens it — adding more decisions, more drift risk, and more regulatory exposure to a gap that periodic audits cannot bridge. The organisations closing the chasm now are not doing so because they are more risk-averse than their competitors. They are doing so because they recognise that the cost of building automated compliance infrastructure today is a fraction of the cost of reconstructing compliance evidence after a regulatory inquiry has already begun.

Key Takeaways

- Traditional GRC frameworks have three structural failure modes at LLM scale: velocity mismatch, interpretability blindness, and drift invisibility.

- Automated GRC moves compliance evidence generation from a periodic manual exercise to a continuous automated process — making human oversight tractable at scale.

- The audit pipeline must be architecturally separate from the model infrastructure it monitors. Compliance infrastructure that depends on the system it governs is a liability, not a control.

- EU AI Act, NIST AI RMF, and ISO 42001 all require ongoing monitoring — not point-in-time certification. Only automated pipelines can satisfy this continuously.

- ViriSIM delivers a closed-loop GRC architecture — extracting and flagging non-compliant logs, feeding violations into fine-tuning pipelines, generating injectable pre-generation guardrails, and producing framework-mapped compliance evidence continuously.