When a regulator asks an organisation to prove that its generative AI system behaved appropriately on a specific date, most organisations discover they cannot answer the question. Not because the system misbehaved — but because nobody built the infrastructure to record what it did. Generative AI systems are high-throughput, probabilistic, and stateful in ways that traditional application logging was never designed to capture. An audit trail that records only errors, or only user-facing outputs, or only system events, is not an audit trail for a generative AI system. It is a partial record with gaps that a regulator will correctly interpret as an absence of governance.

What Immutability Actually Means

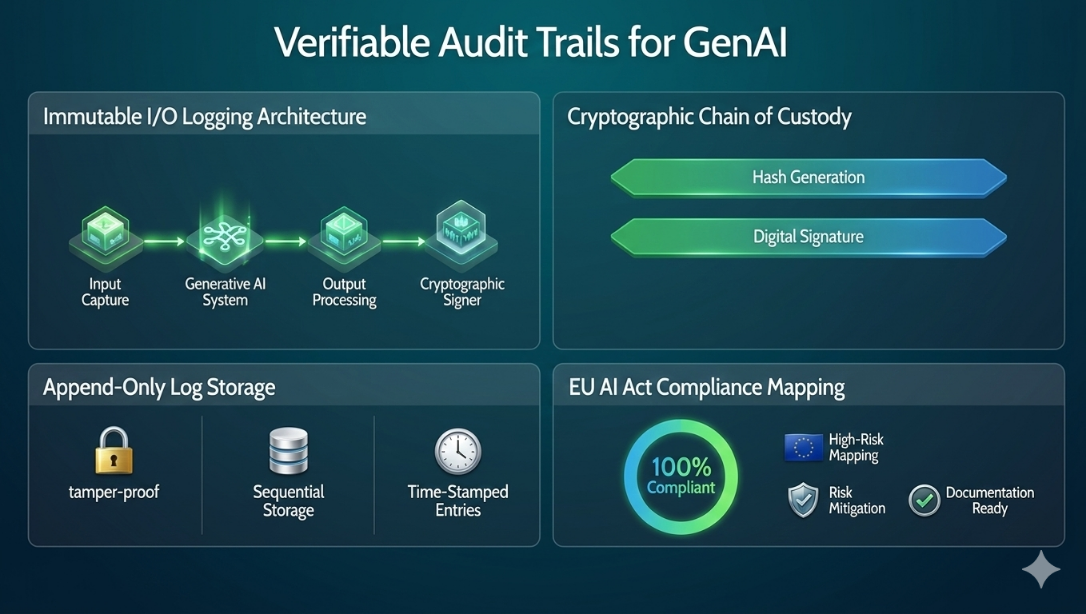

Immutability in audit logging is not a storage property — it is a trust property. A log stored in a read-only database is not immutable; it is write-protected until someone with administrative access decides otherwise. True immutability means that the log record, once written, cannot be modified, deleted, or backdated in any way that is not itself detectable. This requires a cryptographic architecture, not just a storage policy.

The practical standard for immutable audit logs in regulated AI systems is an append-only log where each entry is cryptographically signed at write time, and where the signed content of each entry includes the hash of the previous entry. This creates a chain in which any retroactive modification invalidates every subsequent record. A regulator can therefore verify not just that a specific log entry exists, but that the entire sequence of log entries is intact and unmodified. The log becomes evidence, not just documentation.
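A minimal sketch of the chaining idea in Python, assuming records are JSON-serialisable dictionaries. It shows only the hash chain (the signature layer is left out), and the names `append_entry`, `entry_hash`, and `first_broken_link` are illustrative rather than taken from any library.

```python
import hashlib
import json

# Hypothetical placeholder standing in for the "previous hash" of the first entry.
GENESIS_HASH = "0" * 64


def entry_hash(record: dict, prev_hash: str) -> str:
    """SHA-256 over the record plus the previous entry's hash."""
    # Canonical JSON (sorted keys, no extra whitespace) so identical content hashes identically.
    payload = json.dumps({"record": record, "prev_hash": prev_hash},
                         sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_entry(log: list, record: dict) -> None:
    """Append-only write: each new entry links to the hash of the one before it."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    log.append({"record": record, "prev_hash": prev_hash,
                "hash": entry_hash(record, prev_hash)})


def first_broken_link(log: list):
    """Return the index of the first entry that fails verification, or None if intact."""
    expected_prev = GENESIS_HASH
    for i, entry in enumerate(log):
        if entry["prev_hash"] != expected_prev:
            return i  # link to the previous entry no longer matches
        if entry["hash"] != entry_hash(entry["record"], entry["prev_hash"]):
            return i  # entry content changed after it was written
        expected_prev = entry["hash"]
    return None


log = []
for i in range(3):
    append_entry(log, {"event": i})
print(first_broken_link(log))  # None
```

Editing one record breaks that entry's stored hash, and recomputing the hash to hide the edit breaks the next entry's link instead. Because anyone can recompute every hash in an unsigned chain, it is the signature that stops a wholesale rewrite; the chain alone also cannot reveal entries removed from the end unless the latest hash is recorded somewhere the log operator cannot alter.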

Designing the I/O Logging Layer

A complete I/O logging layer for a generative AI system must capture six categories of data for every inference event.

Request metadata: Timestamp, session identifier, user or service identity, and the API endpoint or interface through which the request was received.

Input content: The full prompt as submitted, including any system prompt, context window contents, and retrieval-augmented content injected at runtime.

Model state: The model version, temperature, top-p, and any other sampling parameters in effect at the time of inference.

Output content: The full model response, not a truncated or summarised version.

Policy enforcement events: Any rules triggered, content filtered, or outputs modified by the policy layer before delivery to the requester.

Latency and resource metrics: Inference duration and token counts. These are needed mainly for capacity planning and cost attribution, but they also matter for compliance in systems bound by response-time SLAs.
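One way to make the six categories concrete is a single immutable record type per inference event. The sketch below is a hypothetical schema, not a standard: field names are illustrative and the grouping simply follows the list above. Serialising to canonical JSON (sorted keys, fixed separators) gives a stable byte form to hash and sign.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class InferenceAuditRecord:
    """Hypothetical per-inference audit record; frozen so it cannot be mutated in memory."""
    # 1. Request metadata
    timestamp_utc: str
    session_id: str
    caller_identity: str
    endpoint: str
    # 2. Input content, exactly as assembled for the model
    system_prompt: str
    context_window: tuple       # prior turns or other context-window contents
    retrieved_context: tuple    # retrieval-augmented passages injected at runtime
    user_prompt: str
    # 3. Model state
    model_version: str
    temperature: float
    top_p: float
    other_sampling_params: tuple  # any further sampling parameters as (name, value) pairs
    # 4. Output content, full and untruncated
    output_text: str
    # 5. Policy enforcement events, e.g. ({"rule": ..., "action": ...},)
    policy_events: tuple = ()
    # 6. Latency and resource metrics
    latency_ms: float = 0.0
    prompt_tokens: int = 0
    completion_tokens: int = 0

    def to_canonical_json(self) -> str:
        # Sorted keys and fixed separators give a stable byte form for hashing or signing.
        return json.dumps(asdict(self), sort_keys=True, separators=(",", ":"))
```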

- Capture at the right layer: Logging must occur at the inference gateway, not at the application layer. Application-layer logging can be bypassed by direct API calls; gateway-layer logging cannot, provided the gateway is the only network path to the model.

- Never log to the model's own storage: Audit logs must be written to infrastructure that is independent of and inaccessible to the model runtime. A model that can read its own audit log is a model that can be prompted to reason about suppressing it.

- Preserve raw inputs: Pre-processing pipelines that clean, truncate, or transform inputs before inference must log the original input, not the processed version. The regulator needs to see what the user actually sent.

- Handle high throughput without sampling: Audit logs for generative AI must be complete — not sampled. A log that captures 10% of inference events is a log that misses 90% of the events a regulator might ask about.
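The four rules above can be sketched as a gateway-side wrapper. This is a minimal illustration under stated assumptions: `gateway_handle` and `AppendOnlySink` are hypothetical names, the in-memory sink stands in for independent append-only storage the model runtime cannot reach, and `preprocess` and `model` are whatever callables the deployment uses. Every request is logged with no sampling branch, including requests that fail, and the raw input is kept alongside the processed version.

```python
import time
from datetime import datetime, timezone
from typing import Callable, List, Optional


class AppendOnlySink:
    """In-memory stand-in for independent audit storage (append and read only)."""

    def __init__(self) -> None:
        self._entries: List[dict] = []

    def append(self, entry: dict) -> None:
        self._entries.append(dict(entry))

    def entries(self) -> List[dict]:
        return [dict(e) for e in self._entries]


def gateway_handle(raw_prompt: str, caller: str,
                   preprocess: Callable[[str], str],
                   model: Callable[[str], str],
                   sink: AppendOnlySink) -> str:
    """Serve one request and log it unconditionally (no sampling)."""
    start = time.monotonic()
    processed: Optional[str] = None
    output: Optional[str] = None
    error: Optional[str] = None
    try:
        processed = preprocess(raw_prompt)
        output = model(processed)
        return output
    except Exception as exc:
        error = repr(exc)
        raise
    finally:
        # Runs on success and on failure, so every request produces an entry.
        sink.append({
            "timestamp_utc": datetime.now(timezone.utc).isoformat(),
            "caller": caller,
            "raw_input": raw_prompt,        # what the user actually sent
            "processed_input": processed,   # what the model actually received
            "output": output,
            "error": error,
            "latency_ms": (time.monotonic() - start) * 1000.0,
        })
```

Logging in a `finally` clause is a deliberate choice: an exception in preprocessing or inference still leaves a record, which is exactly the kind of event a regulator may ask about.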

"An audit trail that a system administrator can edit is a liability document, not a compliance document. The moment you need it most is the moment it will have been modified."

Cryptographic Chain of Custody

The cryptographic chain of custody is the mechanism that transforms a log from a record of events into evidence of events. Each log entry is signed with a private key held by the logging infrastructure — not by the model runtime, not by the application, and not by any party who could have a conflict of interest in the event of a regulatory inquiry. The signature covers the full content of the log entry plus the hash of the immediately preceding entry, creating the chain structure that makes retroactive modification detectable.

Key management for the signing infrastructure is a critical dependency. The signing key must be stored in a hardware security module or equivalent — not in software, not on the same infrastructure as the logs themselves. Key rotation must be scheduled and auditable. And the public keys used for verification must be published and timestamped in a way that allows a regulator to verify a log signed two years ago using the key that was in effect at that time, even after the key has been rotated out.
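Verifying an old entry after rotation comes down to choosing the key that was in effect when the entry was written. The sketch below assumes a published registry of keys with validity windows and a `key_id` recorded in each entry (both hypothetical), and that the registry itself was obtained from a trusted, timestamped publication. It shows only the lookup; the signature check against `public_key` depends on the scheme in use and is omitted.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import List, Optional


@dataclass(frozen=True)
class PublishedKey:
    key_id: str
    public_key: str                  # placeholder for an encoded public key
    valid_from: datetime             # inclusive, timezone-aware
    valid_until: Optional[datetime]  # exclusive; None means still current


def key_in_effect(registry: List[PublishedKey], ts: datetime) -> Optional[PublishedKey]:
    """Return the key whose validity window contains ts, if any."""
    if ts.tzinfo is None:
        raise ValueError("timestamps must be timezone-aware")
    ts = ts.astimezone(timezone.utc)
    for key in registry:
        if key.valid_from <= ts and (key.valid_until is None or ts < key.valid_until):
            return key
    return None


def signed_with_correct_key(entry: dict, registry: List[PublishedKey]) -> bool:
    """Check that an entry names the key that was in effect when it was written."""
    key = key_in_effect(registry, entry["timestamp"])
    return key is not None and key.key_id == entry["key_id"]
```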

Mapping to EU AI Act Requirements

The EU AI Act imposes specific logging requirements on high-risk AI systems that go beyond what most organisations currently capture. Article 12 requires that high-risk AI systems technically allow for the automatic recording of events (logs) over the system's lifetime, including events relevant to identifying situations in which the system may present a risk to health, safety, or fundamental rights, and to post-market monitoring. Article 13 requires that high-risk systems be transparent enough that deployers can interpret the system's output and use it appropriately. Article 17 requires providers to establish and implement a quality management system that includes record-keeping obligations.

Mapped to a concrete logging architecture, these three articles require: logs that capture events at sufficient granularity to reconstruct any specific inference and its context (Article 12); logs that include enough model state information to help interpret how a given output was produced (Article 13); and a documented, auditable process for log retention, access control, and evidence production (Article 17). An immutable I/O logging layer that captures all six data categories described above provides the technical evidence for all three; Article 17 additionally requires that this layer operate under documented, auditable procedures.

Building Regulator-Ready Reports

A regulator-ready report is not a data export from the audit log — it is a structured narrative that maps specific log evidence to specific regulatory requirements, presented in a format that a non-technical regulatory reviewer can interpret without specialist assistance. The difference between a raw log export and a regulator-ready report is the difference between handing a regulator a database dump and handing them a documented answer to the question they actually asked.

Regulator-ready reports for GenAI systems should include four components.

System summary: A description of the AI system, its intended purpose, its deployment context, and the regulatory framework under which it operates.

Incident or period log: The relevant inference events in chronological order with full metadata, formatted for human readability.

Policy enforcement summary: The rules that were active during the period, how many events triggered each rule, and what actions were taken.

Chain of custody attestation: The cryptographic verification evidence demonstrating that the log is complete and unmodified.
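A minimal sketch of assembling the four components from log entries that have already been verified. It assumes each entry carries a `timestamp_utc` string in one consistent ISO 8601 UTC format (so string comparison orders them correctly) and a list of `policy_events` with `rule` and `action` fields; the function and field names are hypothetical. The result is a structured dictionary that a reporting layer would still need to render as readable narrative.

```python
from collections import Counter
from typing import Dict, List


def build_report(system_summary: Dict[str, str], entries: List[dict],
                 start: str, end: str, active_rules: List[str],
                 chain_verified: bool, head_hash: str) -> dict:
    """Assemble the four report components for the period [start, end)."""
    # Incident or period log: in-window events in chronological order.
    period_log = sorted(
        (e for e in entries if start <= e["timestamp_utc"] < end),
        key=lambda e: e["timestamp_utc"],
    )

    # Policy enforcement summary: every active rule, even if it never triggered.
    actions: Dict[str, Counter] = {rule: Counter() for rule in active_rules}
    for e in period_log:
        for ev in e.get("policy_events", []):
            actions.setdefault(ev["rule"], Counter())[ev["action"]] += 1
    policy_summary = {
        rule: {"triggered": sum(c.values()), "actions": dict(c)}
        for rule, c in actions.items()
    }

    return {
        "system_summary": system_summary,
        "period_log": period_log,
        "policy_enforcement_summary": policy_summary,
        # Chain of custody attestation: result of an independent verification run.
        "chain_of_custody": {
            "chain_verified": chain_verified,
            "head_hash": head_hash,
            "entries_in_period": len(period_log),
        },
    }
```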

Key Takeaways

- Immutability is a trust property, not a storage property. Signing each entry at write time, with the previous entry's hash included in the signed content, makes any retroactive modification detectable across the whole chain.

- Complete I/O logging requires six data categories per inference event: request metadata, input content, model state, output content, policy enforcement events, and resource metrics.

- Logging must occur at the inference gateway layer, not the application layer. Gateway-layer logging cannot be bypassed by direct API calls; application-layer logging can.

- The signing key belongs in a hardware security module or equivalent, separate from the log infrastructure, with scheduled and auditable rotation and timestamped public keys so that old logs remain verifiable after rotation.

- EU AI Act Articles 12, 13, and 17 together require granular event capture, model state transparency, and documented retention processes. A complete immutable I/O logging architecture supplies the technical evidence for all three, provided it operates under documented retention and access-control procedures.