Every AI model you run on a hyperscaler's infrastructure is a sovereignty risk. This is not because the providers are malicious, but because the architecture itself creates dependencies that regulators, procurement teams, and enterprise security officers are increasingly unwilling to accept. Data processed outside your jurisdiction, on hardware you don't control, under terms of service that can change with 90 days' notice, is not sovereign data. It is borrowed infrastructure with the illusion of ownership.

The Three Layers of Cloud Lock-In

Cloud AI lock-in operates at three layers simultaneously, and most organisations only recognise the problem at the most visible one. At the data layer, training datasets, vector stores, and fine-tuned model weights are held in proprietary formats on infrastructure you cannot replicate. At the API layer, inference endpoints, rate limits, and model versioning are controlled entirely by the provider, so a model deprecation with 30 days' notice can break production systems with no viable alternative. At the compliance layer, data residency guarantees offered by hyperscalers are contractual, not architectural. You are trusting a legal document, not a network topology.

"Sovereignty is not a feature you can subscribe to. It is an architectural property you either designed in from the start — or didn't."

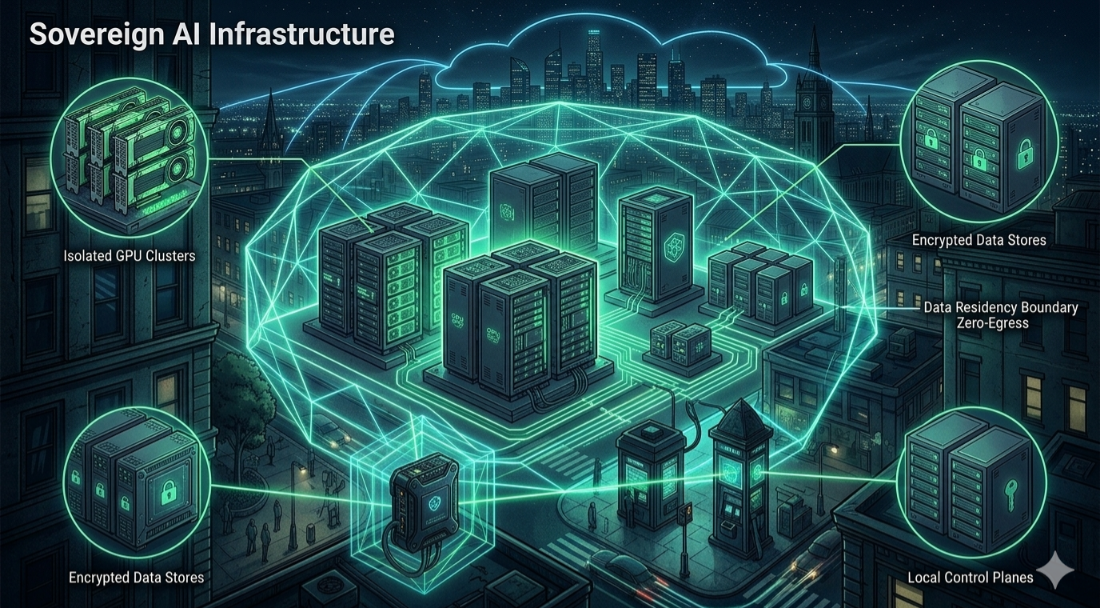

Designing the Sovereign Sandbox

A sovereign AI sandbox is not simply an on-premise server running an open-source model. It is a fully isolated compute environment with defined ingress and egress policies, cryptographic attestation of the execution environment, and audit trails that satisfy regulatory requirements without relying on a third party to generate them.

The architecture has four mandatory layers. The isolation layer uses hardware-level virtualisation — or bare metal — to ensure AI workloads cannot communicate with external networks except through explicitly defined, audited channels. The attestation layer uses TPM chips and secure boot to prove the integrity of the execution environment to any downstream compliance system. The storage layer maintains all model weights, training data, and inference logs in encrypted volumes whose keys never leave the physical boundary. The audit layer generates immutable, append-only logs of every inference request and response — the foundation of any regulator-facing explainability claim.
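One way to make the audit layer's append-only property checkable is a hash chain, in which each entry commits to the hash of its predecessor. The sketch below is a minimal in-memory illustration under stated assumptions, not a production design: the `AuditLog` class, its field names, and the `GENESIS_HASH` constant are hypothetical, and a real deployment would presumably persist entries to write-once storage and anchor the latest hash somewhere operators cannot rewrite.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS_HASH = "0" * 64  # assumed placeholder for the first entry's predecessor


def _entry_hash(prev_hash: str, payload: dict) -> str:
    """Hash the previous entry's hash together with a canonical JSON payload."""
    body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


@dataclass
class AuditLog:
    """In-memory log where every entry commits to the one before it."""
    entries: list = field(default_factory=list)

    def append(self, request: str, response: str) -> str:
        """Record one inference request/response pair and return its hash."""
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        payload = {"seq": len(self.entries), "request": request, "response": response}
        digest = _entry_hash(prev_hash, payload)
        self.entries.append({"payload": payload, "prev": prev_hash, "hash": digest})
        return digest

    def verify(self) -> bool:
        """Recompute the chain from the start.

        An edited or reordered entry, or one removed from the middle, breaks
        the chain. Truncating the tail is NOT detected here; that requires
        comparing against a head hash stored outside the log.
        """
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            if entry["prev"] != prev_hash:
                return False
            if _entry_hash(prev_hash, entry["payload"]) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True
```

Storing the hash returned by the most recent `append` call in a separate system is what would let an auditor detect truncation as well as in-place edits.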

Local GPU Cluster Strategy

The economics of local GPU infrastructure have shifted dramatically. Three years ago, building a meaningful on-premise inference cluster required capital investment that only the largest enterprises could justify. Today, a four-node cluster of current-generation GPUs can serve production-grade inference for most enterprise LLM workloads at a total cost of ownership that beats equivalent cloud spend within 18 months — while eliminating data egress entirely.
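The break-even claim reduces to simple arithmetic: local infrastructure pays off in the first month where upfront capital plus cumulative running costs no longer exceed cumulative cloud spend. The sketch below shows that calculation. The function name and every figure in the example are hypothetical placeholders chosen only to illustrate the arithmetic; they are not measured costs, and a real comparison would also need to account for staffing, depreciation, and utilisation.

```python
def breakeven_month(capex, monthly_opex, monthly_cloud_spend, horizon_months=60):
    """Return the first month in which cumulative local cost is no higher
    than cumulative cloud cost, or None if that never happens in the horizon."""
    for month in range(1, horizon_months + 1):
        local_total = capex + monthly_opex * month
        cloud_total = monthly_cloud_spend * month
        if local_total <= cloud_total:
            return month
    return None


# Illustrative inputs only (assumed, not sourced):
# 360,000 upfront, 10,000 per month to run, versus 30,000 per month in cloud spend.
print(breakeven_month(capex=360_000, monthly_opex=10_000, monthly_cloud_spend=30_000))  # → 18
```

If monthly running costs are not below the monthly cloud bill, the function returns `None`: no amount of time recovers the capital outlay.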

- Inference vs. training separation: Local clusters should be optimised for inference, not training. Fine-tuning can run in an air-gapped batch process; real-time inference is where latency and data sovereignty intersect most critically.

- Cluster topology: A minimum of two nodes with failover capability. Single-node local GPU deployments are a sovereignty solution that becomes an availability problem the moment hardware fails.

- Model weight management: Weights must be versioned, signed, and stored in the same encrypted volume layer as training data. Unsigned or unversioned weights in production are an audit liability.

- Operational overhead: Factor in power density, cooling requirements, and rack space before committing to an architecture the physical facility cannot support. The sovereignty gain disappears if the cluster only achieves 60% uptime.
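The requirement that weights be versioned and signed can be sketched as a small manifest check performed before a model is loaded. The example below is an illustration under loud assumptions: it uses HMAC-SHA256 from the Python standard library as a stand-in for a proper asymmetric signature scheme, and the function names, manifest fields, and key handling are all hypothetical. In practice the signing key would presumably live in an HSM or TPM-backed store rather than in application code.

```python
import hashlib
import hmac


def sign_weights(weights: bytes, version: str, key: bytes) -> dict:
    """Build a manifest binding a version label to the SHA-256 of the weights."""
    digest = hashlib.sha256(weights).hexdigest()
    tag = hmac.new(key, f"{version}:{digest}".encode("utf-8"), hashlib.sha256).hexdigest()
    return {"version": version, "sha256": digest, "signature": tag}


def verify_weights(weights: bytes, manifest: dict, key: bytes) -> bool:
    """Return True only if the weights match the manifest's signed version and digest."""
    digest = hashlib.sha256(weights).hexdigest()
    message = f"{manifest['version']}:{digest}".encode("utf-8")
    expected = hmac.new(key, message, hashlib.sha256).hexdigest()
    # Constant-time comparison avoids leaking how many characters matched.
    return hmac.compare_digest(expected, manifest["signature"])


# Hypothetical usage: refuse to serve a model whose weights fail verification.
key = b"demo-key-not-for-production"
manifest = sign_weights(b"fake-weight-bytes", "1.0.0", key)
print(verify_weights(b"fake-weight-bytes", manifest, key))      # True
print(verify_weights(b"modified-weight-bytes", manifest, key))  # False
```

Because the version label is part of the signed message, relabelling an old set of weights as a newer release also fails verification.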

Compliance & Data Residency

Data residency requirements are no longer theoretical compliance concerns; they are active enforcement priorities. The EU AI Act, GDPR Article 46, India's Digital Personal Data Protection Act, and China's Data Security Law all impose constraints on where AI-processed personal data can reside and who can access it. A sovereign architecture meets these requirements by design rather than through contractual assurances.

For organisations operating across multiple jurisdictions, a single centralised sovereign cluster is insufficient. A federated model — independent sovereign sandboxes per jurisdiction with no cross-border data flow, coordinated by a control plane that exchanges only model metadata and aggregated metrics — is the architecture that satisfies the strictest current and anticipated regulatory requirements.
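The control-plane boundary in a federated model can be enforced with a deny-by-default filter on whatever a sandbox sends outward. The sketch below illustrates the idea only: the field names in `ALLOWED_FIELDS` and the `to_control_plane_message` function are hypothetical, and the actual set of permitted metadata would have to be agreed with each jurisdiction's compliance owner.

```python
# Assumed allowlist: only model metadata and aggregated metrics may cross a border.
ALLOWED_FIELDS = frozenset({
    "jurisdiction",
    "model_version",
    "weights_sha256",
    "request_count",
    "p95_latency_ms",
    "error_rate",
})


def to_control_plane_message(report: dict) -> dict:
    """Return a copy of the report if every field is on the allowlist.

    Unknown fields raise an error instead of being silently dropped, so an
    accidental attempt to export prompts or records is surfaced immediately.
    """
    unexpected = set(report) - ALLOWED_FIELDS
    if unexpected:
        raise ValueError(f"fields not permitted to leave the sandbox: {sorted(unexpected)}")
    return dict(report)


# Hypothetical usage inside one jurisdiction's sandbox.
message = to_control_plane_message({
    "jurisdiction": "EU",
    "model_version": "1.2.0",
    "request_count": 18250,
    "error_rate": 0.004,
})
print(message)
```

Rejecting rather than stripping unknown fields is a deliberate choice here: a failed export is easier to audit than a partially sanitised one.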

Implementation Roadmap

Transitioning to a sovereign AI architecture is a phased programme, not a single migration. Organisations that attempt to move all AI workloads simultaneously typically create availability gaps that undermine the business case for the project entirely.

- Phase 1 — Inventory and classify: Map every AI workload by data sensitivity, regulatory jurisdiction, and cloud dependency. Without this, prioritisation is guesswork.

- Phase 2 — Sandbox highest-risk workloads first: Start with workloads processing the most sensitive data, not the most technically complex. Early compliance wins build organisational support for the broader programme.

- Phase 3 — Build and validate the local GPU layer: Provision, attest, and compliance-validate the inference infrastructure before migrating any workloads. A sovereign cluster that hasn't been validated is just on-premise cloud.

- Phase 4 — Migrate, audit, decommission: Move workloads with zero-downtime cutover strategies, generate the first round of sovereign audit trails, and only then formally decommission cloud dependencies.
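Phases 1 and 2 can be sketched as a small inventory structure plus an ordering rule. The example below is a rough illustration: the `Workload` fields, the 1 to 4 sensitivity scale, the tie-break on jurisdiction count, and the sample workloads are all assumptions introduced here, not part of any standard classification scheme.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    name: str
    sensitivity: int            # assumed scale: 1 (public) to 4 (regulated personal data)
    jurisdictions: tuple        # regulatory jurisdictions the workload's data falls under
    cloud_dependencies: tuple   # provider services the workload currently relies on


def migration_order(workloads):
    """Order workloads for sandboxing: most sensitive data first.

    Ties are broken by the number of jurisdictions involved (an assumed
    heuristic), then by name so the ordering is deterministic.
    Technical complexity is deliberately not part of the key.
    """
    return sorted(workloads, key=lambda w: (-w.sensitivity, -len(w.jurisdictions), w.name))


# Hypothetical inventory.
inventory = [
    Workload("support-chatbot", 2, ("EU",), ("inference-api",)),
    Workload("claims-triage", 4, ("EU", "IN"), ("inference-api", "vector-store")),
    Workload("hr-screening", 4, ("EU",), ("inference-api",)),
]
for workload in migration_order(inventory):
    print(workload.name, workload.sensitivity, workload.jurisdictions)
```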

Key Takeaways

- Cloud lock-in is a three-layer problem — data, API, and compliance. Solving only one layer leaves the others as live vulnerabilities.

- Sovereignty is architectural, not contractual. Data residency clauses do not satisfy regulators who require architectural enforcement.

- Local GPU economics have shifted — a properly designed on-premise cluster reaches cost parity with cloud AI spend within 18 months for most enterprise workloads.

- Sandbox isolation must reach the storage layer. Network-only isolation is insufficient; encryption keys must never leave the physical boundary.

- Multi-jurisdiction deployments require a federated model — one sovereign sandbox per regulated jurisdiction, no cross-border data flow.